Humans are able to rapidly understand scenes by utilizing concepts extracted from prior experience. Such concepts are diverse, and include global scene descriptors, such as the weather or lighting, as well as local scene descriptors, such as the color or size of a particular object. So far, unsupervised discovery of concepts has focused on either modeling the global scene-level or the local object-level factors of variation, but not both. In this work, we propose COMET, which discovers and represents concepts as separate energy functions, enabling us to represent both global concepts as well as objects under a unified framework. COMET discovers energy functions through recomposing the input image, which we find captures independent factors without additional supervision. Sample generation in COMET is formulated as an optimization process on underlying energy functions, enabling us to generate images with permuted and composed concepts. Finally, discovered visual concepts in COMET generalize well, enabling us to compose concepts between separate modalities of images as well as with other concepts discovered by a separate instance of COMET trained on a different dataset.

Video

The trouble with β-VAE, MONET & Co.

Unsupervised discovery of visual concepts has achieved great success, and has seen applications in reinforcement learning and planning. Despite this, existing unsupervised decomposition methods are, to date, limited in their capabilities. One underlying weakness is that they are often tailored to the particular type of concept under consideration, with approaches such as β-VAE excelling at representing global factors of variation, and other approaches such as MONET excelling at representing object factors of variation. Furthermore, inferred concepts in either case do not generalize well. While humans are able to combine concepts learned from one experience with those from another - there does not exist an way to combine a concept inferred from one dataset with another concept inferred from a separate dataset.

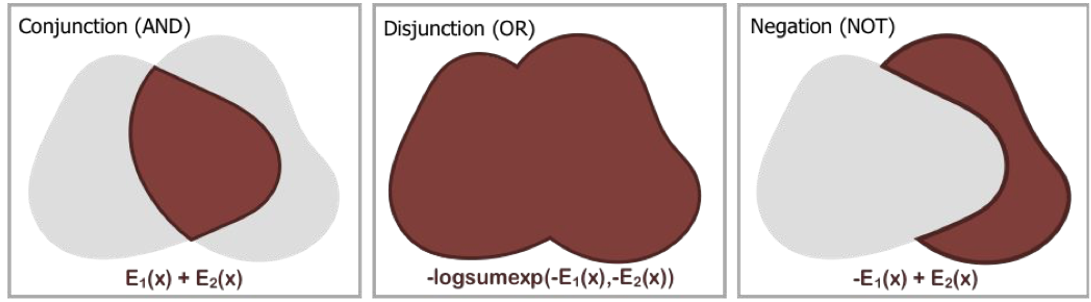

Composable Energy Network

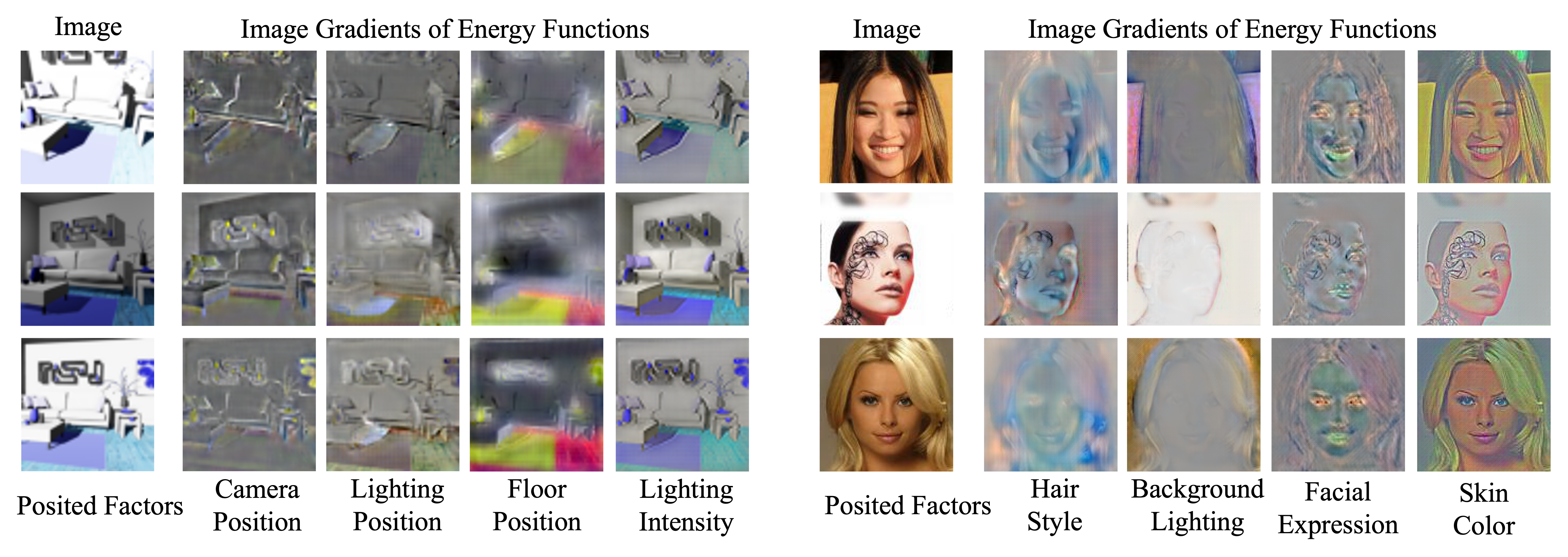

We introduce Composable Energy Network, or COMET, which overcomes these difficulties. COMET discovers and represents visual concepts as a set of composable energy functions. Each individual inferred energy function defines a separate minimal energy state which represents either a global or local factor of variation. Multiple energy functions are then composed together through summation to define a new minimal energy state consisting of each specified factor of variation. Individual composed energy functions generalize well and can be composed with energy functions inferred by other COMET models on disjoint datasets. First, we illustrate the ability of COMET to capture global factors of variation. Below, we illustrate the gradients of each energy function with respect to an image, indicating aspects of an image an energy function pays attention to. We further label each energy function with a posited global factor through visual inspection.

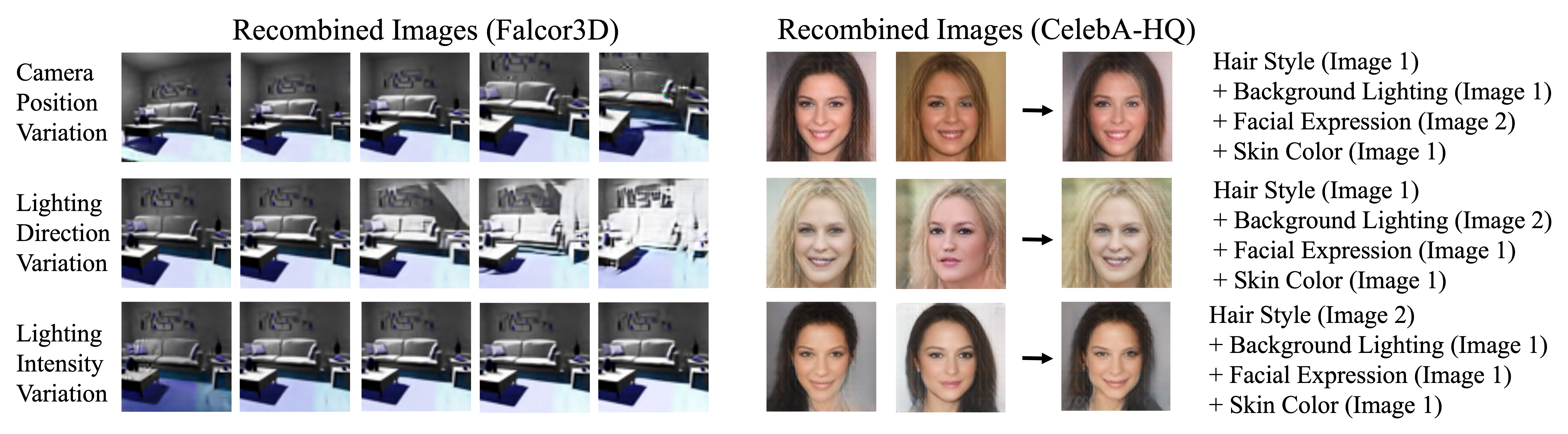

We may further recombine individual energy functions discovered in both of the above domains together.

Object Level Decomposition

Decomposed energy functions may also represent local object factors of variation. Below, we illustrate how COMET enables us to decompose and recombine energy functions representing both local and global factors of variation simultaneously, as well as compose multiple instances of objects together at once.

Compositional Generalization

We find that inferred energy functions from COMET can further generalize well. In the main paper, we illustrate how we may compose energy functions across separate modes of data. Below, we illustrate how we can combine energy functions from one COMET model trained on one dataset with energy functions from another COMET model trained on a separate dataset.

Related Projects

Check out our related projects on compositional energy functions!